In this continuation of our conversation, LP’s most tenured Creative Director Eric Mika explores how we can rediscover the lost meaning of objects that express their function through their form, shares the technologies he thinks hold the most untapped potential, and gives us a sneak peek into the studio’s latest work with IBM. Read Part I of our conversation with Eric here.

Storymaking Part II

Continuing Our Conversation With Creative Director Eric Mika

In your keynote at MIT’s Hacking Arts conference, you pointed out that “our tools have lost specificity, most are now trapped inside tiny rectangles.” Do you envision a return to tools whose physical form matches their use?

I picked up the lament about tiny rectangles from John Ryan, a former Director of Interaction Design at Local Projects. The gist is that transposing pencils, flashlights, cameras, compasses, clocks, etc. into touchscreen abstractions has sapped expressive objects from our everyday experiences. This has been compounded by the mass regression towards charmless flat design over the last seven years. Form and function have never been more estranged!

Of course, convenience has a charm all its own, and I’d definitely sit out a sudden wave of neo-Luddite phone-breaking. The mission here isn’t to bring back flashlights. Instead, I think the tangibility void bolsters the case for creating spaces that offer an escape from the tiny rectangle: spaces where you can dwell in an idea.

Something I love about the work we do at Local Projects is that we often have the opportunity to think of an entire space as an interface, with a range of affordances — both physical and digital.

We can design the software and space in unison to create an experience tuned to build a visitor’s understanding of a complex idea through, once again, exploration rather than exposition.

Designing for museums and public spaces is uniquely challenging on a number of fronts. First, almost all of our users are first-time users. The learning curve has to be as flat as possible, and if there’s any slope to it, the climb has to feel like fun instead of work. Second, we’re accountable when visitors turn existential and ask “why am I here?”, “why this space?”, and “why isn’t this just an app?”

A return to tangibility offers an answer to these challenges. Interfaces that express constraints through their form are quickly understood. Integrating these interfaces well with the surrounding space opens up opportunities for collaboration and (actual) social interactions between visitors that a tiny rectangle could never abide.

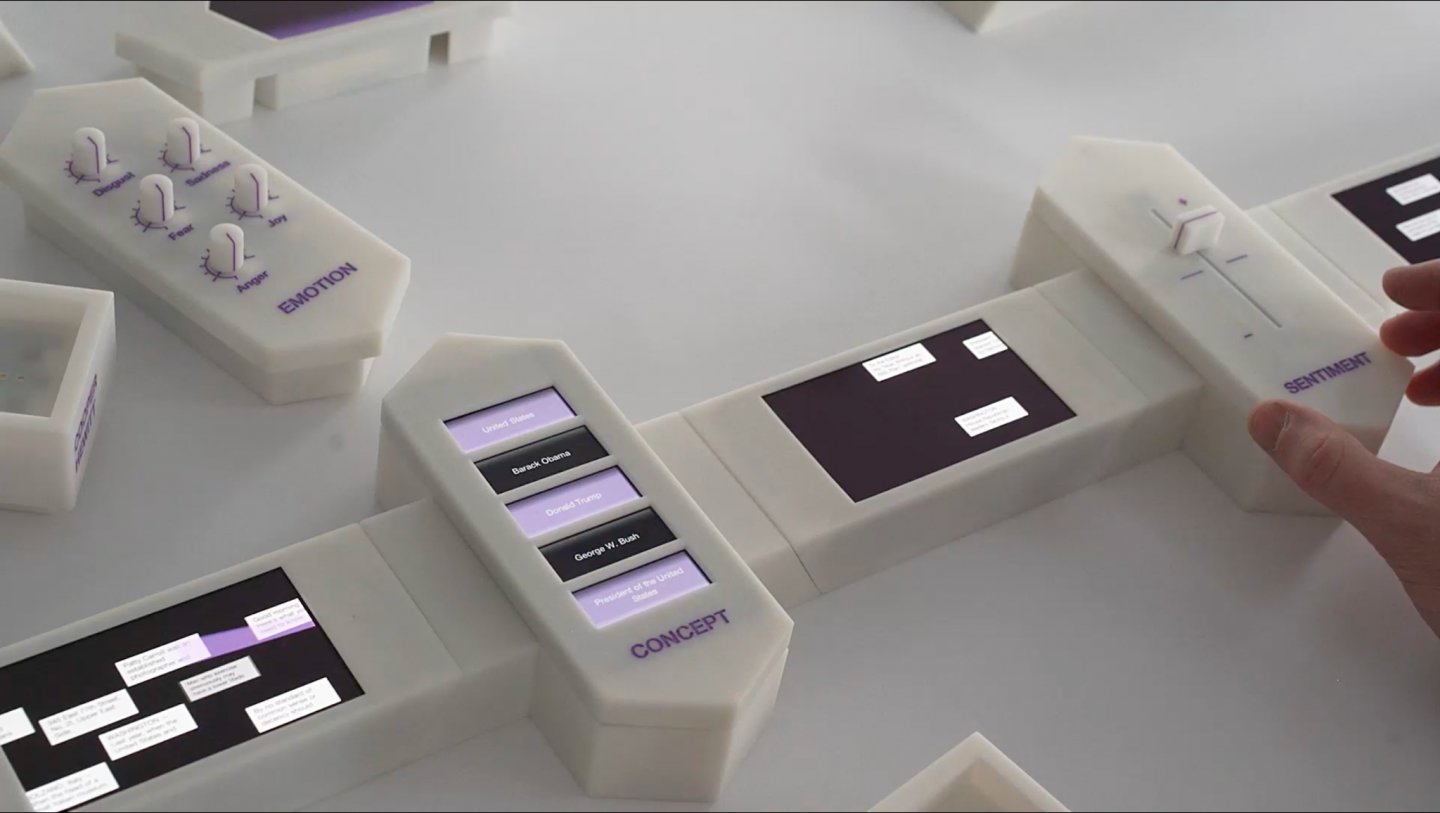

One example to make this all a bit more concrete: A recent brief asked us to design something to explain how machine learning algorithms may be applied and combined to extract insights from large collections of images and text. We ended up designing and fabricating a set of palm-sized blocks, each with an embedded screen and magnetic connectors on both ends.

(Yes, technically it’s a tiny rectangle — the point here is not to forsake the screen, but to cast it into new contexts.) Each block represented a different data source or algorithm. Some included sliders and dials for fine-tuning. When snapped together, streams of data — images, news headlines — flowed freely from block to block, filtered and routed according to the rules of each block’s algorithm. Since it took just a few seconds to rearrange the chain and see the data change, the block approach allowed for quick and hands-on iteration towards an intuitive understanding of an otherwise dense subject.
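The chain-of-blocks idea can be sketched in a few lines of code. This is only an illustration of the concept, not the software that ran on the physical blocks: the `Headline` type, the two filter blocks, and the sample data are all hypothetical, chosen to show how rearranging a chain changes what flows out the other end.

```python
from dataclasses import dataclass
from typing import Callable, Iterable, Iterator, List


@dataclass(frozen=True)
class Headline:
    """Hypothetical data item flowing through the chain."""
    text: str
    topic: str


# A block takes a stream of items and returns a (possibly filtered) stream.
Block = Callable[[Iterable[Headline]], Iterator[Headline]]


def topic_filter(topic: str) -> Block:
    """Block that passes only headlines tagged with `topic`."""
    def block(stream: Iterable[Headline]) -> Iterator[Headline]:
        return (h for h in stream if h.topic == topic)
    return block


def keyword_filter(keyword: str) -> Block:
    """Block that passes only headlines containing `keyword` (case-insensitive)."""
    def block(stream: Iterable[Headline]) -> Iterator[Headline]:
        return (h for h in stream if keyword.lower() in h.text.lower())
    return block


def run_chain(source: Iterable[Headline], blocks: List[Block]) -> List[Headline]:
    """Feed the source through each block in order, like snapping blocks together."""
    stream: Iterable[Headline] = source
    for block in blocks:
        stream = block(stream)
    return list(stream)


# Made-up sample data standing in for a "data source" block.
SOURCE = [
    Headline("Museum opens new wing", "art"),
    Headline("Chip maker reports earnings", "business"),
    Headline("New sculpture garden opens", "art"),
]

if __name__ == "__main__":
    # Swap, add, or remove blocks here to see the output change.
    for item in run_chain(SOURCE, [topic_filter("art"), keyword_filter("garden")]):
        print(item.text)
```

Because each block only sees the stream handed to it by its neighbor, reordering the list passed to `run_chain` is the software equivalent of unsnapping and rearranging the physical blocks.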

We know your team’s work with IBM is a little under wraps, but what can you tell us about that project? Where are you excited to see IBM go next?

Our latest collaboration with IBM is centered on creating new concepts and tools to adapt their global client experience centers to a hybrid model. We’re inventing new ways for IBM to collaborate and solve problems with their clients that scale seamlessly from a completely distributed online experience to purpose-built in-person workspaces.

The digital half of the hybrid equation carries the challenge of rethinking how online collaboration works. The era of remote work has forced us all to become familiar with a range of off-the-shelf tools, and, candidly, there’s much room for improvement and specialization. Currently, online collaboration seems to take the form of either Zoom-flavored death by PowerPoint, or whiteboard-inspired free-for-alls in tools like Mural. The former is drudgery; the latter has a steep learning curve and can quickly devolve into chaos.

We think there’s a space between these two extremes to create a real-time collaborative online experience combining the narrative coherence of a linear presentation with key moments of interactivity and group engagement tailored to the exact conversation IBM wants to have with their clients.

Ultimately, we want these advantages to combine into a client experience that will act as a differentiator for IBM, and shift remote collaboration from something fatigue-inducing into something clear, focused, and productive.

It’s been an exciting project to work on because there aren’t yet canonical solutions to many of the core design challenges surrounding real-time online collaboration. The team gets to invent from the ground up.

What emerging technologies or trends are you excited about right now?

Many of our clients have world-class collections of art or other artifacts, and I think there are significant opportunities to apply machine learning techniques to index these collections in ways that unlock new modes of interaction and exploration.

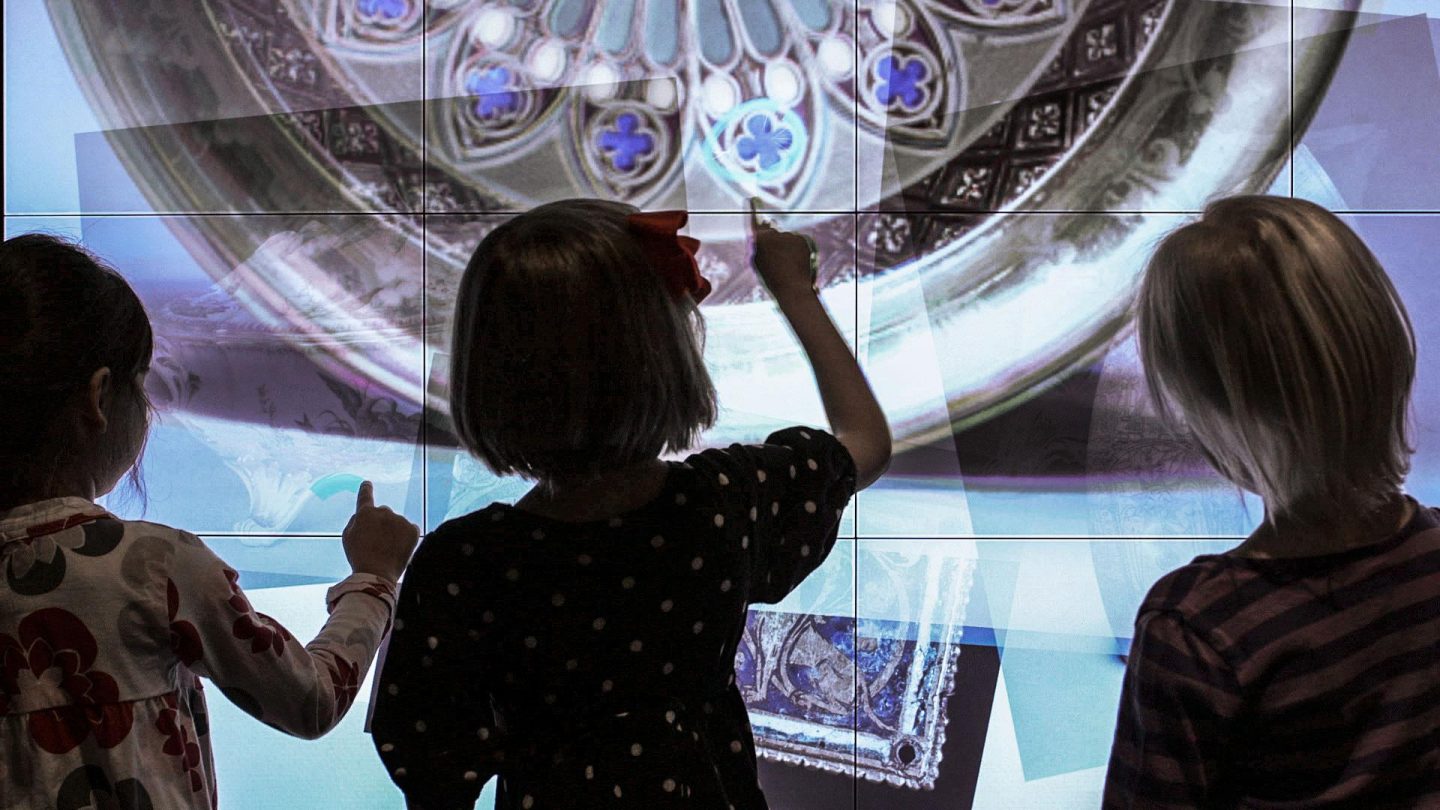

To put this opportunity into context, we can look back at an exhibit titled “Line and Shape” which we created for Cleveland Museum of Art’s Gallery One back in 2012 in collaboration with artist Zach Lieberman. This interactive allowed visitors to draw a shape with their finger on a large touchscreen (a circle, a squiggle, anything) and then immediately see an artwork from the museum’s collection containing a similar shape appear. Behind the scenes, we accomplished this effect by painstakingly tracing the key contours in hundreds of artworks by hand, which were later searched by an algorithm to match the visitors’ squiggles. We looked for ways to automate the artwork tracing process, but nothing could quite achieve the quality of line we needed back then. If we wanted to recreate this exhibit in 2021, we’d likely be able to successfully delegate the tracing task to a machine learning powered contour-finding algorithm, which would allow us to easily scale the experience from hundreds of images to thousands. And by automating this aspect of the exhibit’s content pipeline, the library of images could be easily expanded as the museum’s collection grows.
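For readers curious how a drawn squiggle might be matched against a library of traced contours, here is a minimal sketch. It is not the algorithm used in “Line and Shape”, which the interview does not describe; it assumes a simple approach in which each path is resampled to a fixed number of points, centered and scaled, and then compared point by point. All function names and the tiny two-shape library in the usage note are hypothetical.

```python
import math
from typing import Dict, List, Tuple

Point = Tuple[float, float]


def resample(pts: List[Point], n: int = 32) -> List[Point]:
    """Return n points spaced evenly along the polyline's length."""
    cum = [0.0]  # cumulative length at each original vertex
    for a, b in zip(pts, pts[1:]):
        cum.append(cum[-1] + math.dist(a, b))
    total = cum[-1]
    if total == 0:
        return [pts[0]] * n
    out, seg = [], 0
    for k in range(n):
        target = total * k / (n - 1)
        # Advance to the segment that contains the target length.
        while seg < len(pts) - 2 and cum[seg + 1] < target:
            seg += 1
        span = cum[seg + 1] - cum[seg]
        t = 0.0 if span == 0 else min((target - cum[seg]) / span, 1.0)
        (x0, y0), (x1, y1) = pts[seg], pts[seg + 1]
        out.append((x0 + t * (x1 - x0), y0 + t * (y1 - y0)))
    return out


def normalize(pts: List[Point]) -> List[Point]:
    """Center on the centroid and scale so the larger extent is 1."""
    cx = sum(x for x, _ in pts) / len(pts)
    cy = sum(y for _, y in pts) / len(pts)
    width = max(x for x, _ in pts) - min(x for x, _ in pts)
    height = max(y for _, y in pts) - min(y for _, y in pts)
    size = max(width, height) or 1.0
    return [((x - cx) / size, (y - cy) / size) for x, y in pts]


def shape_distance(a: List[Point], b: List[Point]) -> float:
    """Mean point-to-point distance, ignoring which end the stroke started from."""
    pa, pb = normalize(resample(a)), normalize(resample(b))
    forward = sum(math.dist(p, q) for p, q in zip(pa, pb)) / len(pa)
    backward = sum(math.dist(p, q) for p, q in zip(pa, reversed(pb))) / len(pa)
    return min(forward, backward)


def best_match(drawing: List[Point], library: Dict[str, List[Point]]) -> str:
    """Return the key of the traced contour closest to the drawing."""
    return min(library, key=lambda name: shape_distance(drawing, library[name]))
```

With a library such as `{"line": [(0.0, 0.0), (1.0, 0.0)], ...}`, a call like `best_match([(10, 5), (150, 5), (300, 5)], library)` would pick the horizontal line regardless of where on the screen, or how large, it was drawn. The sketch is not rotation-invariant and assumes closed shapes start at roughly the same point, so a production system would need something more robust.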

Beyond extracting metadata en masse, I think there’s also significant potential in using machine learning techniques to enable a kind of leveraged creativity, where small gestures from the visitor create dramatic visual effects. A good example of this type of interaction is Planet Word Museum’s recently launched “Word Worlds” gallery, where visitors can virtually paint a room-sized projected landscape with one of a dozen adjectives like “verdant” and “surreal” to see how a word transforms the scene.

Our custom software achieves this effect by selectively revealing many layers of landscape rendered in advance by our design team, which means there’s ultimately a hard limit on the number of words visitors can paint with and the number of scenes that can be created.
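The layer-reveal idea can be illustrated with a toy example. This is a sketch under heavy assumptions, not the Word Worlds software: images are reduced to small grids of characters, each word has one hypothetical pre-rendered layer, and a “paint mask” records which word, if any, the visitor has brushed over each cell.

```python
from typing import Dict, List, Optional

Image = List[List[str]]            # toy "pixels": one character per cell
Mask = List[List[Optional[str]]]   # which word (if any) was painted on each cell


def blank_mask(width: int, height: int) -> Mask:
    """Start with nothing painted."""
    return [[None] * width for _ in range(height)]


def paint(mask: Mask, word: str, cx: int, cy: int, radius: int) -> None:
    """Mark every cell within `radius` of (cx, cy) as painted with `word`."""
    for y, row in enumerate(mask):
        for x in range(len(row)):
            if (x - cx) ** 2 + (y - cy) ** 2 <= radius ** 2:
                row[x] = word


def composite(base: Image, layers: Dict[str, Image], mask: Mask) -> Image:
    """Show the painted word's pre-rendered layer where painted, else the base scene."""
    return [
        [
            layers[mask[y][x]][y][x] if mask[y][x] is not None else base[y][x]
            for x in range(len(base[0]))
        ]
        for y in range(len(base))
    ]


if __name__ == "__main__":
    base = [["."] * 5 for _ in range(3)]
    layers = {
        "verdant": [["v"] * 5 for _ in range(3)],
        "surreal": [["s"] * 5 for _ in range(3)],
    }
    mask = blank_mask(5, 3)
    paint(mask, "verdant", 1, 1, 1)
    paint(mask, "surreal", 4, 1, 0)
    for row in composite(base, layers, mask):
        print("".join(row))
```

The sketch makes the hard limit visible: `layers` needs one pre-rendered image per word and per scene, so every new adjective means more rendering work up front.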

It’s easy to imagine a machine-learning-powered approach to this experience that draws on recent progress in generative techniques to incorporate many additional words and scenes without multiplying the design team’s workload. Our experiments with this generative approach during prototyping revealed that the algorithms’ output is still a bit too dream-like: every scene ends up with a mandatory coat of “surreal” paint. It wasn’t quite the right approach for Word Worlds, but the quality of the visual output from these algorithms is improving rapidly thanks to massive investments in machine-learning research in academia and industry. We’re hoping to find the right project to further explore their creative potential.

Augmented reality bears the burden of being a buzzword, but recent advances in software and hardware are finally starting to make good on a decade’s worth of promises. Making physical space play a meaningful role in interactive experiences is central to Local Projects’ design approach, and augmented reality opens up a range of opportunities for activating a space and the objects within.

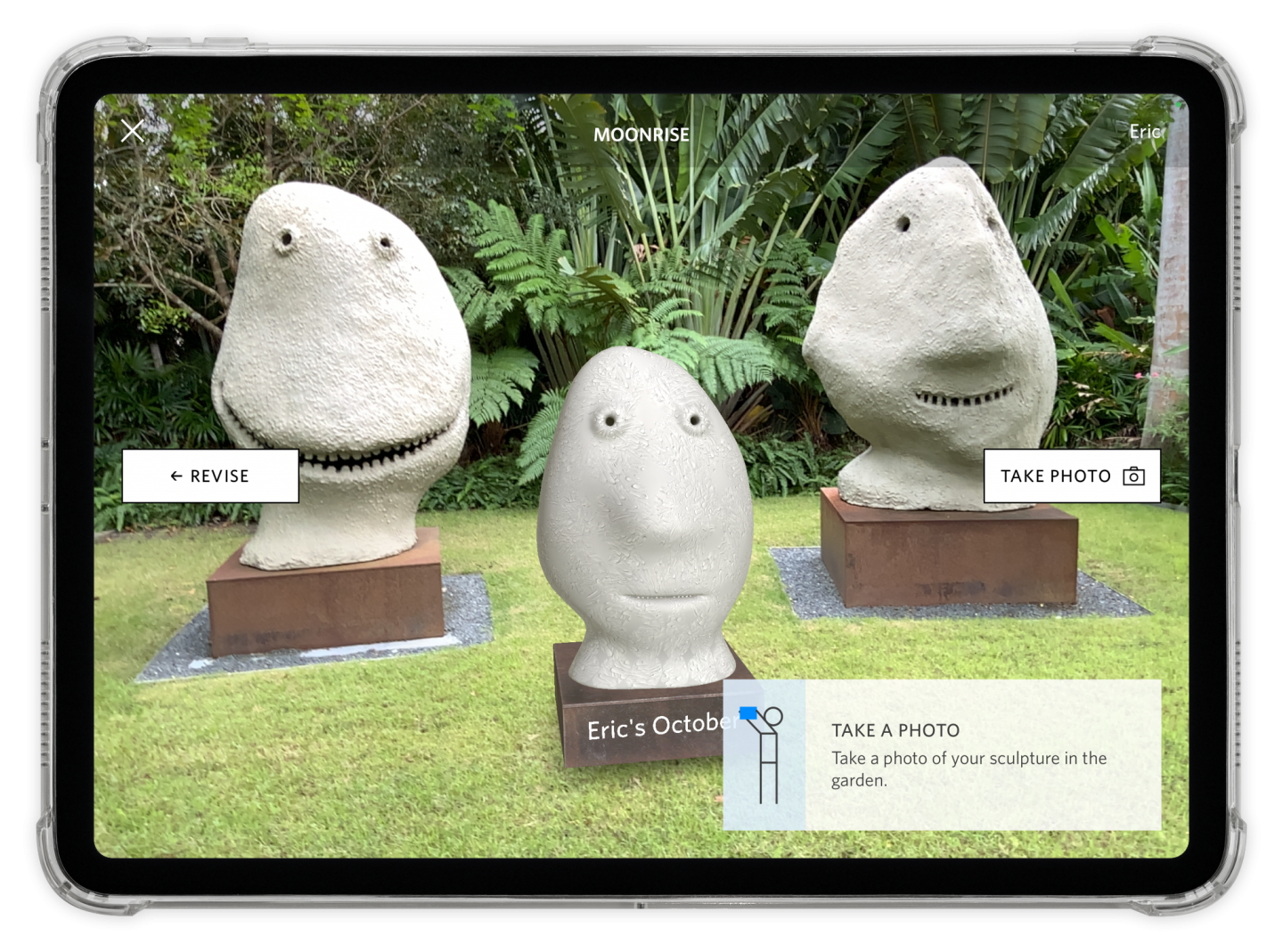

I’m particularly interested in the potential for augmented reality to enable a layer of shared visitor-created content in museums — a kind of community meta-gallery right alongside the physical collection. We explored this idea in the Norton Art+ app with an interaction that allows you to create and install an artwork of your own in the sculpture garden.

You’re not limited to seeing just your own work in situ; you also see the sculptural creations from other visitors appear in the garden via AR. I risk getting ahead of myself, but I think there’s something particularly welcoming, and even democratizing, in inviting visitors’ creations into the museum in this way.

Exploring this idea further, another interaction in Norton Art+ prompts visitors to collectively contribute AR elements to an artwork’s composition. Once again, visitors don’t just see their own contributions; they also see elements placed by other visitors. This opens a visual dialog not just between the visitor and the artwork, but also between visitors. This level of intervention, even if it’s only happening in AR, definitely requires a degree of open-mindedness from both the institution and the artist, but I think it’s worth the effort to inspire young visitors and to cultivate a sense of permeability and community in the space.

There are still technical challenges in scaling this idea from single galleries to entire museums, but progress is rapid and it should soon be feasible to create a spatially consistent layer of virtual content across many thousands of square feet. I hope to one day see many institutions incorporate a layer of community-created artworks in AR — and I’d love to talk about the possibilities with anyone similarly inspired.

Thanks for reading. Want to get in touch with Eric or learn more about Local Projects? Reach out to us at info@localprojects.com!